Open vs Closed Distributed Processes

Distributed processes fall into two categories: open and closed. Open processes are started in one service and will finish in another after passing through any number of services in between. Closed processes are driven by one particular service from start to finish, and while other services may be involved, the originating service is in overall …

Category: Opinion

Microservices with AWS Lambda

I've been building microservices for several years. I've mostly used .NET, .NET Core, and Ruby on Rails to build them, and I've generally deployed them to either AWS EC2 or Azure Service Fabric. I've found most enterprises aren't ready for managing microservices in containers, either in the cloud or in their own data centres. Keeping things …

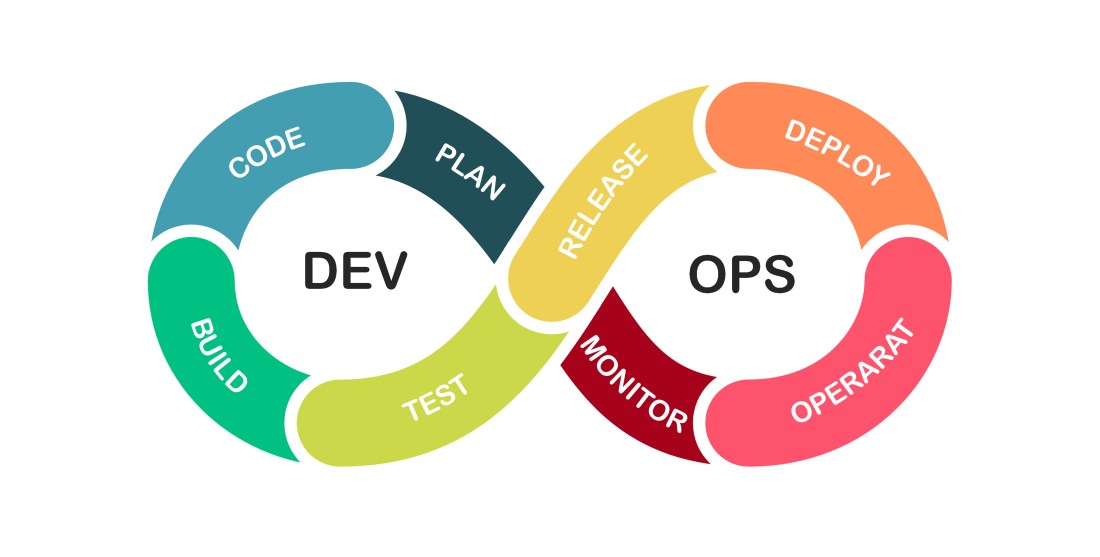

Regarding DevOps

When implemented correctly, DevOps reaches beyond the Development teams and becomes a unifying strategy for the entire enterprise.

Conway’s Shackles?

Does Conway's Law have a dark side?

Managing a Remote Working Team

Let Coding Daddy answer your remote team management woes...

Benefiting from Remote Working

Some of the benefits of remote working.

Testing Times

A dive into the many different ways developers can and should test.

A Refreshing Change

Data refreshes are not the answer.

Automation with ForgeRock AM 6.5

Navigating the huge complexity of CI/CD with ForgeRock Access Manager 6.5.1.

Where Patterns go to Die

An essay on why software patterns become anti-patterns and how to avoid pattern rot.