You still can't trust AI to do what you expect, and the speed at which it can make changes means a death of a thousand cuts if you don't keep control.

Category: Efficiency

Achieving Software Quality Through Layered Practices

Software quality thrives not on excelling in one area but on maintaining competence across multiple layers of delivery. Each layer acts as a filter, removing around two-thirds of the defects that reach it. Focusing solely on one element, like exhaustive testing, leads to diminishing returns. Collaborative approaches across testing, CI/CD, and requirements prevent issues more effectively.
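The filter idea can be put into rough numbers. The sketch below is only an illustration: it assumes every layer independently removes exactly two-thirds of whatever defects reach it, and the function name `remaining_defects` and the starting count of 81 are made up for the example.

```python
from fractions import Fraction


def remaining_defects(initial: int, layers: int,
                      removal_rate: Fraction = Fraction(2, 3)) -> Fraction:
    """Defects left after passing through `layers` filters.

    Assumption (not from the post): each layer independently removes the
    same fraction of the defects that reach it.
    """
    return initial * (1 - removal_rate) ** layers


# Hypothetical starting point of 81 defects, chosen so the numbers divide cleanly.
for n in range(1, 5):
    print(n, "layer(s):", remaining_defects(81, n), "defects remain")
```

Under these assumptions, three modest layers leave 1/27 of the original defects. A single layer would need to catch roughly 96% on its own to match that, which is where the diminishing returns of perfecting one practice show up.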

Turning requirements into product

I'm a software engineer at heart - I love writing code, deploying functionality, and seeing the impact it has. Even when I'm just shaving a few seconds off a repetitive task by removing an unnecessary button click, it's all about small improvements, often. So it might be surprising to hear that most problems which impact …

Sagas and distributed transactions

Controversial opinion: I haven't ever had any useful discussion about sagas with anyone at any company I've ever worked with. I've found the people who bring sagas up and make a song and dance about them are generally the same people who tend to over-engineer solutions and have difficulty 'keeping it simple'. I'd like to …

Code Reviews Without Pull Requests

I'm going to put my most controversial opinion right out there and wave it around, because I am sick of internal development teams blindly trusting code reviews performed on pull requests. Don't get me wrong. I think there are some very clever UIs provided by most providers (GitHub, Azure DevOps, Bitbucket, etc.), but no matter …

Delivery Focused Software Teams

Software delivery in many organisations is still far too waterfall, inefficient, and often unhealthy to be a part of. The myriad of methodologies which can be applied in different combinations to achieve something more efficient can be overwhelming. Normal organisational structures deny people the authority to make the efficiency savings which seem logical to them. …

Technical Excellence

"Don't overengineer this. We need to move as fast as we can." - Too many business representatives. The Agile Manifesto is built on 12 pillars, the 9th of which (at the time of writing) is: "Continuous attention to technical excellence and good design enhances agility." I regard the Agile Manifesto as a thing of truth. It has …

Open vs Closed Distributed Processes

Distributed processes fall into two categories: open and closed. Open processes are started in one service and will finish in another after passing through any number of services in between. Closed processes are driven by one particular service from start to finish, and while other services may be involved, the originating service is in overall …

Microservices with AWS Lambda

I've been building microservices for several years. I've mostly used DotNet, DotNet Core, and Ruby on Rails to build them, and I've generally deployed them either into AWS EC2, or Azure Service Fabric. I've found most enterprises aren't ready for managing microservices in containers, either in the cloud or their own data centres. Keeping things …

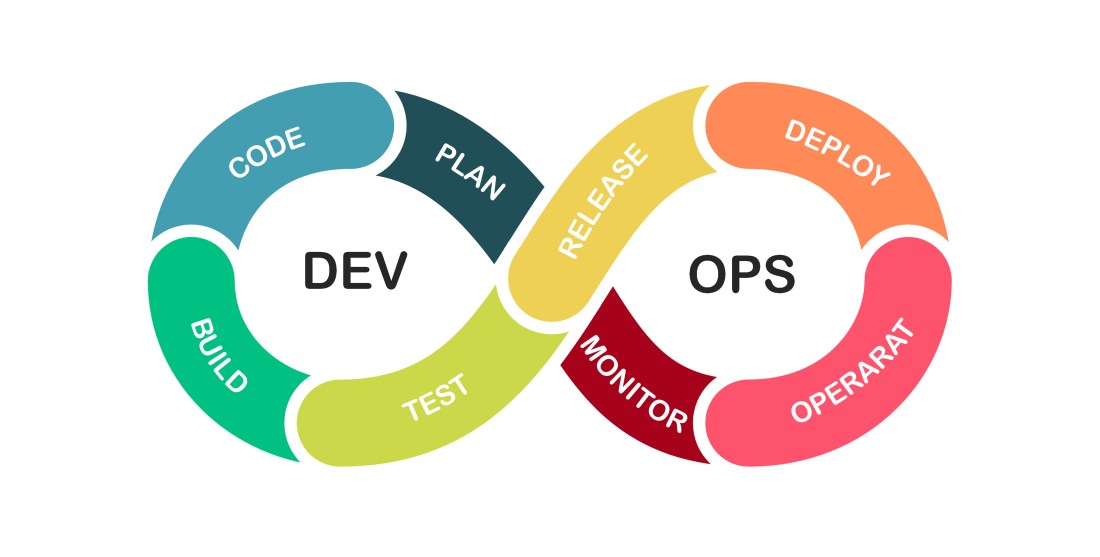

Regarding DevOps

When implemented correctly, DevOps reaches beyond the Development teams and becomes a unifying strategy for the entire enterprise.