I'm a software engineer at heart - I love writing code, deploying functionality, and seeing the impact it has. Even when I'm just shaving a few seconds off a repetitive task by removing an unnecessary button click - it's all about small improvements, often. So it might be surprising to hear that most problems which impact … Continue reading Turning requirements into product

Category: Team Management

Code Reviews Without Pull Requests

I'm going to put my most controversial opinion right out there and wave it around, because I am sick of internal development teams blindly trusting code reviews performed on pull requests. Don't get me wrong. I think there are some very clever UIs offered by most providers (GitHub, Azure DevOps, Bitbucket, etc.) but no matter … Continue reading Code Reviews Without Pull Requests

Delivery Focused Software Teams

Software delivery in many organisations is still far too waterfall, inefficient, and often unhealthy to be a part of. The myriad of methodologies which can be applied in different combinations to achieve something more efficient can be overwhelming. Normal organisational structures deny people the authority to make the efficiency savings which seem logical to them. … Continue reading Delivery Focused Software Teams

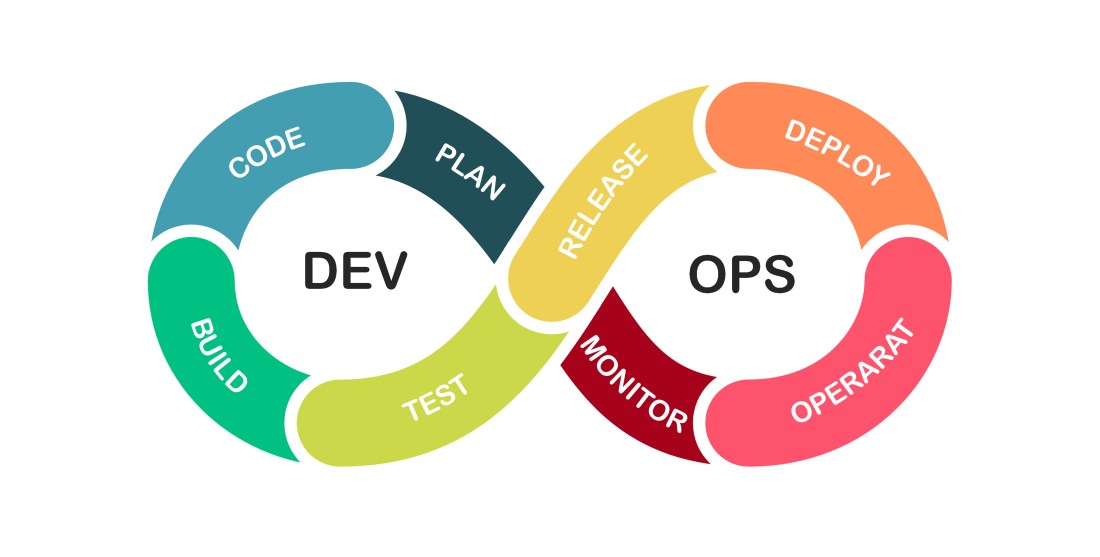

CI/CD

I get so frustrated when I see perfectly talented DevOps engineers building pipelines which drive big-bang thinking, and calling it CI/CD. Can you all please stop? Continuous integration and continuous deployment are two very special principles which drive high quality, prevent bugs reaching production, and generally help things get delivered quicker. Automation alone does … Continue reading CI/CD

Technical Excellence

"Don't overengineer this. We need to move as fast as we can." - Too many business representatives

The Agile Manifesto is built on 12 pillars, the 9th of which (at the time of writing) is: "Continuous attention to technical excellence and good design enhances agility." I regard the Agile Manifesto as a thing of truth. It has … Continue reading Technical Excellence

Managing a Remote Working Team

Let Coding Daddy answer your remote team management woes...

Benefiting from Remote Working

Some of the benefits of remote working.

Testing Times

A dive into the many different ways developers can and should test.

Avoiding Delivery Hell

Some enterprises have grown their technical infrastructure to the point where DevOps and continuous deployment are second nature. The vast majority of enterprises are still on their journey, or don't even realise there is a journey for them to take. Businesses aren't generally built around great software development practices - many businesses are set … Continue reading Avoiding Delivery Hell

What’s Slowing Your Business?

There are lots of problems that prevent businesses from responding to market trends as quickly as they'd like. Many are not IT related, some are. I'd like to discuss a few problems that I see over and over again, and maybe present some useful solutions. As you read this, please remember that there are always … Continue reading What’s Slowing Your Business?