Beware – here be dragons!

Over the last year, I’ve become very familiar with Forgerock’s Access Manager platform. Predominantly I’ve been working with a single, manually managed 13.5 instance, but since experiencing 3 days of professional services from Forgerock, I’ve been busily working on automating AM 6.5.1 using Team City, Octopus, and Ansible. While the approach I’ve taken isn’t the one Forgerock explicitly recommends, it isn’t frowned upon, and it is in line with the containerised deployment mechanisms which are expected to become popular with AM v7. I can’t share the source code for what was implemented, as that would be a breach of client trust, but given the lack of material available on automating AM (and the sheer complexity of the task), I think it’s worth outlining the approach.

Disclaimer alert!

What I cover here is a couple of steps on from what was eventually implemented for my client. This is because automating something as complex as Forgerock AM is new for them, as are Ansible Roles and volatile infra. We went as far as having a single playbook for the AM definition, and we had static infra – the next logical step would be to break the playbook down into roles and generate the infra with each deploy.

Having already been through the pain of distilling non-functional requirements down to a final approach, I feel it would be easier to start at the end here. So let’s talk implementation.

Tech Stack

The chosen tech stack was driven by what was already in use by my client, augmented with a few tools whose absence we had felt.

Code repositories: git in Azure DevOps

Build platform: Team City

Deployment platform: Octopus

Configuration management: Ansible

Package management: JFrog Artifactory

Local infra as code tool: Vagrant

A few points I’d like to make about some of these:

- Azure DevOps looks really nice, but using any of its hosted build/deploy agents requires whitelisting an appalling range of thousands of IP addresses. The problem goes away if you self-host agents, but it’s a poor effort on the part of Microsoft.

- Octopus isn’t my preferred deployment tool. I find Octopus is great for beginners, but it lends itself to point-and-click configuration rather than versioning deploy code in repos. It’s also very over-engineered and opinionated, forcing its concepts onto users. My personal preference is Thoughtworks’ GoCD, which takes the opposite approach.

- You don’t need to use Vagrant for local development; I only call it out here because I believe it can speed things up considerably. It’s possible to execute Ansible playbooks via the Vagrantfile, or (my preference) write a bash script which can be used manually, via Ansible, or from virtually any other platform.

- I don’t have huge amounts of experience with Ansible, but it seems to do the job pretty well. I’m sure I missed a few tricks in how I used it.

Architecture

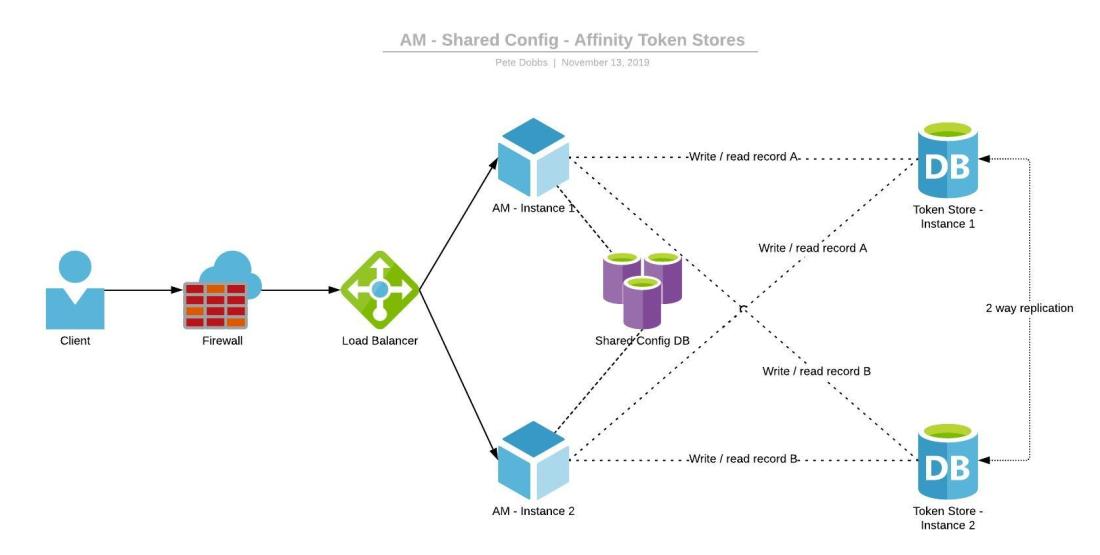

Generally, with a multi-node deployment of Forgerock AM, we end up with something looking like fig. 1.

There are two items to note about this configuration:

- The shared config database means ssoadm/Amster commands only need executing against one instance. The other instance then just needs restarting to pick up the config which has been injected into the config database.

- Affinity is the name for the mechanism AM uses to load balance the token stores without risking race conditions and dirty reads. If a node writes a piece of data to token store instance 1, then every node will always go back to instance 1 for that piece of data (failing over to other options if instance 1 is unavailable). This helps where replication takes longer than the gap between writing and reading.

Affinity rocks. Until we realised it was available, there was a proxy in front of the security token stores set to round robin. If you tried to read something immediately after writing it, you’d often get a dirty read or an exception. Affinity does away with this by deciding where the data should be stored based on a hash of the data location, which every node can calculate. Reads and writes from every node will always go to the same token store instance first.
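Here's a minimal Python sketch of hash-based affinity to make the idea concrete. It illustrates the principle only and is not AM's actual algorithm. The store names, the use of SHA-256, and the function name `pick_store` are all assumptions. Each node hashes the key to a starting position in the same ordered list of stores, so all nodes agree on the primary without coordinating. The next store in the list acts as the failover.

```python
import hashlib


def pick_store(key: str, stores: list[str], available: set[str]) -> str:
    """Choose the token store for `key`, failing over in a fixed order.

    Illustration of affinity only (not AM's real algorithm): every node hashes
    the same key to the same starting position, so all nodes agree on where a
    piece of data lives without talking to each other.
    """
    if not stores:
        raise ValueError("no token stores configured")
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    start = int.from_bytes(digest[:8], "big") % len(stores)
    # Walk the list from the hashed position, wrapping round and skipping stores that are down.
    for offset in range(len(stores)):
        candidate = stores[(start + offset) % len(stores)]
        if candidate in available:
            return candidate
    raise RuntimeError("no token store available")


if __name__ == "__main__":
    stores = ["store-1", "store-2", "store-3"]
    primary = pick_store("session-abc123", stores, set(stores))
    print("primary:", primary)
    # With the primary down, every node falls back to the same next store.
    print("failover:", pick_store("session-abc123", stores, set(stores) - {primary}))
```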

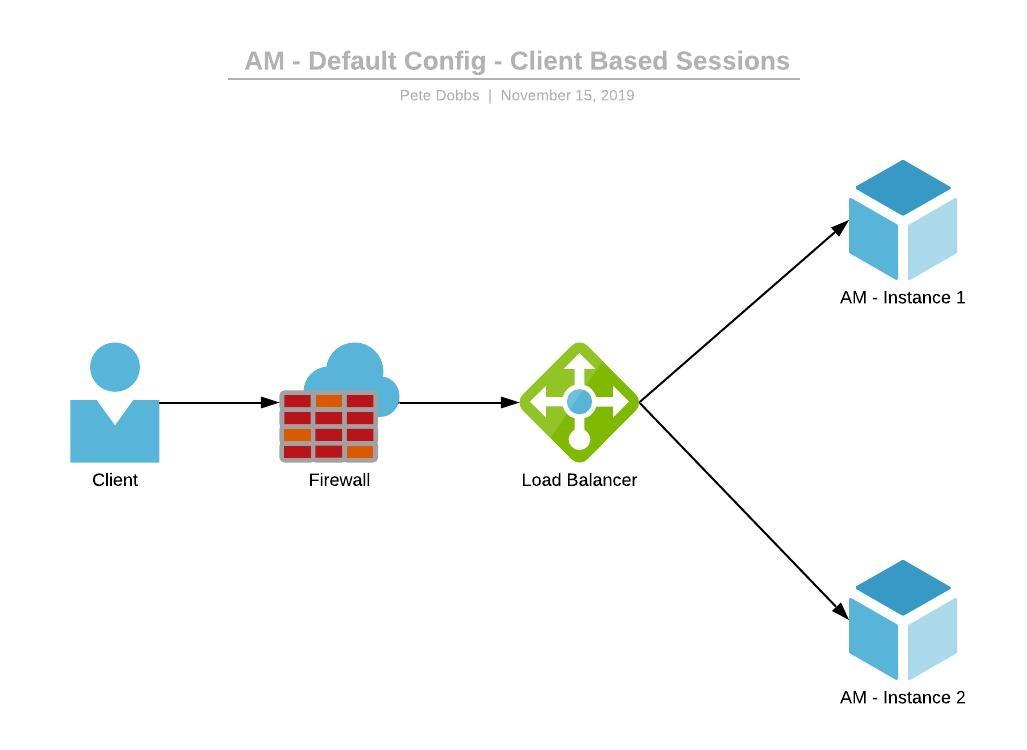

For my purposes, I found that the amount of data I needed to store in the user’s profile was tiny; I had maybe two properties. This led me down the path of using client-based sessions to store the profile. The benefit of this approach is that we don’t really need any security token stores, and our architecture ends up looking like fig. 2.

We don’t just do away with the token stores. Because we are fully automating the deployment, we don’t need to share a config database – we know our config is aligned because it is recreated with every deploy exactly as it is in the source code.

Keys

Ok, so it isn’t quite as easy as that. Because we aren’t sharing config, we can’t allow the deploy process to pick random encryption keys; every instance has to use the same ones. These keys are used to encode session info, security tokens, and cookies. To align them, we need to run a few commands during deployment.

set-attr-defs --verbose --servicename iPlanetAMSessionService -t global -a "openam-session-stateless-signing-rsa-certificate-alias=<< your cert alias >>"

set-attr-defs --verbose --servicename iPlanetAMSessionService -t global -a "openam-session-stateless-encryption-rsa-certificate-alias=<< your cert alias >>"

set-attr-defs --verbose --servicename iPlanetAMSessionService -t global -a "openam-session-stateless-encryption-aes-key=<< your aes key >>"

set-attr-defs --verbose --servicename iPlanetAMSessionService -t global -a "openam-session-stateless-signing-hmac-shared-secret=<< your hmac key >>"

set-attr-defs --verbose --servicename iPlanetAMAuthService -t organization -a "iplanet-am-auth-hmac-signing-shared-secret=<< your hmac key >>"

set-attr-defs --verbose --servicename iPlanetAMAuthService -t organization -a "iplanet-am-auth-key-alias=<< your cert alias >>"

set-attr-defs --verbose --servicename RestSecurityTokenService -t organization -a "oidc-client-secret=<< your oidc secret >>"

These settings are ssoadm commands, mostly found in this helpful doco, though I had to dig a bit further for one or two. Some of the values have minimum complexity rules. The format I’ve given is how they would appear if you are using the ssoadm do-batch command to run a number of instructions from a batch file.
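These values must be identical on every instance but shouldn't live in the repo. One option is to render the batch file at deploy time from secrets held by the deployment tool. Below is a minimal Python sketch of that idea. The attribute names are copied from the batch file above. The value keys (`cert_alias`, `aes_key`, and so on), the function names, and the 32-byte secret length are assumptions for illustration. Check Forgerock's documentation for each attribute's real format and complexity rules.

```python
import base64
import secrets

# (service name, schema type, attribute, key into the values dict).
# Attribute names are copied from the batch file above; the value keys
# ("cert_alias", "aes_key", ...) are hypothetical names for this sketch.
SETTINGS = [
    ("iPlanetAMSessionService", "global", "openam-session-stateless-signing-rsa-certificate-alias", "cert_alias"),
    ("iPlanetAMSessionService", "global", "openam-session-stateless-encryption-rsa-certificate-alias", "cert_alias"),
    ("iPlanetAMSessionService", "global", "openam-session-stateless-encryption-aes-key", "aes_key"),
    ("iPlanetAMSessionService", "global", "openam-session-stateless-signing-hmac-shared-secret", "hmac_key"),
    ("iPlanetAMAuthService", "organization", "iplanet-am-auth-hmac-signing-shared-secret", "hmac_key"),
    ("iPlanetAMAuthService", "organization", "iplanet-am-auth-key-alias", "cert_alias"),
    ("RestSecurityTokenService", "organization", "oidc-client-secret", "oidc_secret"),
]


def random_secret(num_bytes: int = 32) -> str:
    """Generate a random base64-encoded secret.

    Run this once per environment and keep the result in your secret store:
    every AM instance must receive the same value. The 32-byte length is an
    assumption; check the requirements for each attribute.
    """
    return base64.b64encode(secrets.token_bytes(num_bytes)).decode("ascii")


def render_batch(values: dict[str, str]) -> str:
    """Render one set-attr-defs line per setting, ready for ssoadm do-batch."""
    missing = sorted({key for *_, key in SETTINGS} - set(values))
    if missing:
        raise KeyError(f"missing values: {', '.join(missing)}")
    lines = [
        f'set-attr-defs --verbose --servicename {service} -t {schema_type} -a "{attribute}={values[key]}"'
        for service, schema_type, attribute, key in SETTINGS
    ]
    return "\n".join(lines) + "\n"
```

In a deploy step, you would build the `values` dict from your deployment tool's sensitive variables. Then write `render_batch(values)` to a file and pass that file to ssoadm do-batch.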

SAML gotcha

To make client based profiles work for SAML authentication, I was surprised to find that I needed to write a couple of custom classes.

SAML auth isn’t something we wanted to do, but we were forced down this route due to limitations of another 3rd party platform.

I started off with this doco from Forgerock, and with Forgerock’s am-external repo. With some debug level logging, I was able to find that I needed to create a custom AccountMapper and a custom AttributeMapper. It seems that both of the default classes were coded to expect the profile to be stored in a db, regardless of whether client sessions were enabled or not. Rather than modifying the existing classes, I added my own classes to avoid breaking anything else which might be using them.

Referencing the new classes is, annoyingly, not well documented. First, build the project and drill down into the compiled output (I just used the two .class files created for my new classes), then copy them into WEB-INF/lib/openam-federation-library.jar inside the war file. Make sure the .class files go in the right location within the jar, i.e. the folder path matching their package. I managed to reference these classes in my ‘identityprovider.properties’ file with these XML elements:

<Attribute name="idpAccountMapper">

<Value>com.sun.identity.saml2.plugins.YourCustomAccountMapper</Value>

</Attribute>

<Attribute name="idpAttributeMapper">

<Value>com.sun.identity.saml2.plugins.YourCustomAttributeMapper</Value>

</Attribute>
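The jar patching step described above is easy to get wrong by hand, so it may be worth scripting. Because the war file is kept unzipped in the repo, WEB-INF/lib/openam-federation-library.jar is an ordinary file on disk, and a jar is just a zip archive. Here's a minimal Python sketch using the standard `zipfile` module. The function name is hypothetical, and the sketch assumes the classes live in the `com.sun.identity.saml2.plugins` package used in the XML above. It rewrites the jar rather than appending to it, so a rebuilt class replaces the old entry instead of creating a duplicate.

```python
import shutil
import tempfile
import zipfile
from pathlib import Path


def add_classes_to_jar(jar_path: Path, class_files: list[Path], package: str) -> None:
    """Copy compiled classes into a jar at the path matching their package.

    A class in package com.sun.identity.saml2.plugins must be stored as
    com/sun/identity/saml2/plugins/<Name>.class or the JVM won't find it.
    Existing entries with the same name are replaced, not duplicated.
    """
    prefix = package.replace(".", "/") + "/"
    new_entries = {prefix + f.name: f for f in class_files}

    # Write the patched jar next to the original, then swap it into place.
    with tempfile.NamedTemporaryFile(dir=jar_path.parent, suffix=".jar", delete=False) as tmp:
        tmp_path = Path(tmp.name)

    with zipfile.ZipFile(jar_path) as src, zipfile.ZipFile(tmp_path, "w", zipfile.ZIP_DEFLATED) as dst:
        # Copy every existing entry except the ones being replaced.
        for item in src.infolist():
            if item.filename not in new_entries:
                dst.writestr(item, src.read(item.filename))
        for arcname, path in new_entries.items():
            dst.write(path, arcname)

    shutil.move(str(tmp_path), str(jar_path))
```

For example, `add_classes_to_jar(Path("warFile/WEB-INF/lib/openam-federation-library.jar"), [Path("YourCustomAccountMapper.class"), Path("YourCustomAttributeMapper.class")], "com.sun.identity.saml2.plugins")`.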

As code

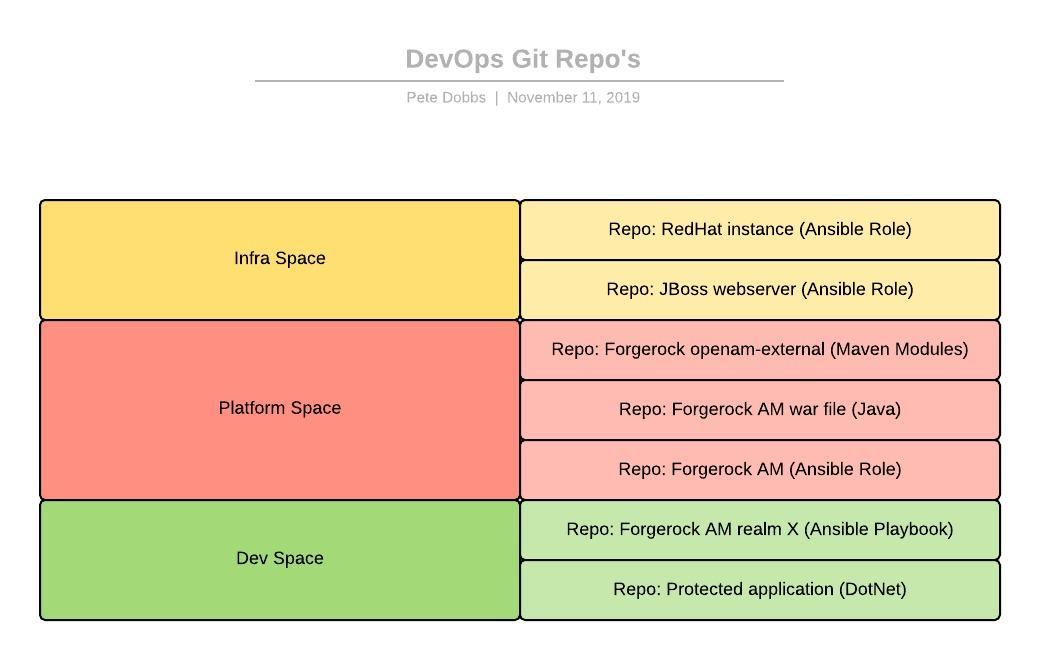

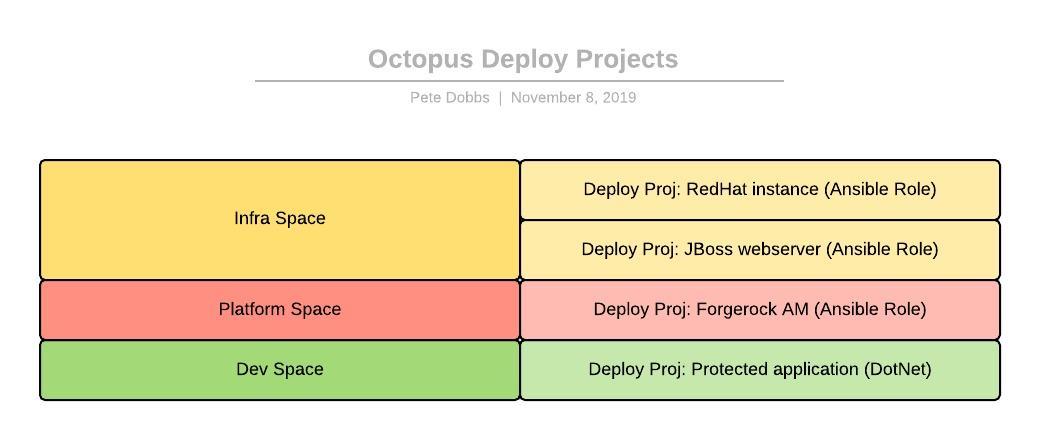

To fully define the AM deployment in code, I ended up with the git repositories shown in fig. 3.

Infra space

Hopefully you’re using fully volatile instances, and creating/destroying new webservers all the time. If you are, then this should make some sense. There’s a JBoss webserver role which references a RedHat server role. These can be reused by various deployments and they’re configured once by the Infra team.

I’m not going to go into much detail about these, as standards for building instances will change from place to place.

We didn’t have fully volatile infra when I implemented AM automation, which meant it was important to completely remove every folder from the previous deploy before re-deploying. While developing, I’d often run into situations where a setting was left over from a previous run, so a deploy that worked on an existing server would fail on a new instance.
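With static infra, the clean-up is worth scripting so that nothing from the previous run survives. Here's a minimal Python sketch. The paths in `DEPLOY_DIRS` are hypothetical placeholders for wherever your AM config directory, configurator tools, and deployed war actually live. Inside an Ansible play, the `file` module with `state: absent` does the same job.

```python
import shutil
from pathlib import Path

# Hypothetical locations left behind by a previous deploy; substitute your own.
DEPLOY_DIRS = [
    Path("/opt/am/config"),
    Path("/opt/am/tools"),
    Path("/opt/jboss/standalone/deployments/openam.war"),
]


def clean(paths: list[Path]) -> list[Path]:
    """Delete each path (file or whole directory tree) if it exists.

    Returns the paths that were actually removed, so the deploy log shows
    what was wiped before the fresh install.
    """
    removed = []
    for path in paths:
        if path.is_dir() and not path.is_symlink():
            shutil.rmtree(path)
            removed.append(path)
        elif path.exists() or path.is_symlink():
            path.unlink()
            removed.append(path)
    return removed
```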

Platform space

The Platform Space is about managing the 3rd party applications that support the enterprise. This space owns the repo for customising the war file, a copy of the am-external repo from Forgerock, and the Ansible Role defining how to deploy a totally vanilla instance of AM – this references the JBoss webserver role. These are all artifacts which are needed in order to just deploy the vanilla, reusable Forgerock AM platform without any realms.

Dev space

The Dev Space should be pretty straightforward. It contains a repo for the protected application, and a repo for the AM Realm to which the application belongs. The realm definition is an Ansible Playbook rather than a role, because there isn’t a scenario where it would be shared. Also, although ansible-galaxy can download the dependencies from git, it doesn’t execute them; you still need a point of entry for running the play and its dependencies, and a playbook serves that purpose. One of the files in the playbook should be a requirements.yml, which is used to initiate the chain of dependencies through the other roles (described in a little more detail below).

Repo: Forgerock AM war file

My solution structure for building the war file looks like this:

- root

  - warFile

  - customCode

    - code

    - tests

  - amExternal

  - xui

    - openam-ui-api

    - openam-ui-ria

  - staticResources

We can go through each of these subfolders in turn.

warFile

The war file is an unzipped copy of the official file, downloaded from here. I was using version 6.5.1 of Access Manager. This folder is a Maven project configured to output a .war file. Before compiling this war file, we need to pull in all the customisations from the rest of the solution.

customCode

This is (unsurprisingly) for custom Java code, built against the Forgerock AM code. The type of things you might find here would be plugins, auth nodes, auth modules, services, and all sorts of other points of extension where you can just create a new ‘thing’ and reference it by classname in the realm config.

Custom code is pretty straightforward. New libraries are the easiest to deal with, as you’re writing code against an interface. You compile to a jar and copy that jar into the war file under /WEB-INF/lib/, along with any dependencies. As long as you are careful with your namespaces and keep an eye on the size of what you’re writing, you can probably get away with building a single jar file for all your custom code. This makes things easier in the sense that you can do everything in one project, right alongside an unzipped war file. If you start to need multiple jars to break down your code further, consider moving your custom code to a different repo and hosting the jars on an internal Maven server.

Because you are writing new code here, there is of course the opportunity to add some unit tests, and I suggest you do. I found that keeping my logic out of any classes which implement anything from Forgerock was a good move – allowing me to test logic without worrying about how the Forgerock code hangs together. This is probably sage advice at any time on any other platform, as well.

Useful link: building custom auth nodes (may require a Forgerock account to access)

amExternal

The code in am-external is a little trickier. This repo from Forgerock has around 50 modules in it, and you’ll probably only want to recompile a couple. I’m not really a Java developer, so rather than try to get every module working, I elected to create my own git repo with am-external in it. I kept track of customisations in the git history and in README.md, and then manually copied the recompiled jars over into my war file build. I placed these compiled jars into the amExternal folder, with a build script which simply copies them into /WEB-INF/lib/ before the war file is compiled.
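That copy step really can be simple. Here's a minimal Python sketch, assuming it runs from the solution root with the amExternal and warFile folders laid out as above. The function name is hypothetical.

```python
import shutil
from pathlib import Path


def copy_jars(source_dir: Path, war_dir: Path) -> list[str]:
    """Copy every jar in source_dir into <war_dir>/WEB-INF/lib.

    Jars with the same file name overwrite the stock copies. If a recompiled
    jar has a different name (e.g. a version suffix), delete the stock jar as
    well, otherwise both copies of the classes end up on the classpath.
    """
    lib_dir = war_dir / "WEB-INF" / "lib"
    lib_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    for jar in sorted(source_dir.glob("*.jar")):
        shutil.copy2(jar, lib_dir / jar.name)
        copied.append(jar.name)
    return copied
```

Calling `copy_jars(Path("amExternal"), Path("warFile"))` just before the Maven build of the war fits it into the process described above.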

xui

This is (in my opinion) a special case from am-external, a module called openam-ui. We already had XUI customisations from a while ago; otherwise I would probably not be bothering with XUI. From my own experience, and having discussed this during some on-site Forgerock Professional Services, XUI is a pretty clunky way to do things. The REST API in AM 6.5+ is excellent, and you can easily consume it from your own login screen.

For previous versions of AM, it’s been possible just to copy the XUI files into the war file before compilation, but now we have to use the compiled output.

Instructions for downloading the am-external source and the XUI are here. New themes can be added at: /openam-ui-ria/src/resources/themes/ – just copy the ‘dark’ folder and start from there. There are a couple of places where you have to add a reference to the new theme, but the above link should help you out with that as well.

This module needs to be compiled at the openam-ui level, and the output copied into the war file under the /XUI folder.

staticResources

We included a web.xml and a keepAlive.jsp as non-XUI resources. I found a nice way to handle these is to recreate the warFile structure in the staticResources folder, add your files there, and use a script to copy the entire folder structure recursively into the war file, overwriting matching files while leaving everything else in place.

Some of these (amExternal and staticResources) could have been left out, and the changes made directly into the war file. I didn’t do this for two reasons:

- The build scripts which copy these files into place explain to any new developers what’s going on far better than a git history would.

- By leaving the war file clean (no changes at all since downloading from Forgerock), I can confidently replace it with the next version and know I haven’t lost any changes.
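The recursive copy for staticResources can lean on `shutil.copytree` with `dirs_exist_ok=True` (Python 3.8+), which merges into an existing folder instead of failing. This minimal sketch uses a hypothetical function name. It also reports which stock files were overridden, so the build log shows exactly what changed in the otherwise clean war file.

```python
import shutil
from pathlib import Path


def overlay(static_dir: Path, war_dir: Path) -> list[str]:
    """Recursively copy static_dir over war_dir and return the overridden files.

    Files present in both are overwritten by the staticResources copy;
    everything else already in the war folder is left untouched.
    """
    overridden = sorted(
        p.relative_to(static_dir).as_posix()
        for p in static_dir.rglob("*")
        if p.is_file() and (war_dir / p.relative_to(static_dir)).exists()
    )
    shutil.copytree(static_dir, war_dir, dirs_exist_ok=True)
    return overridden
```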

The AM-SSOConfiguratorTools

The AM-SSOConfiguratorTools-{version}.zip file can be downloaded from here. The version I was using is 5.1.2.2, but you will probably want the latest version.

Push this zip into Artifactory, so it can be referenced by the Ansible play which installs AM.

You have a choice to make here about how much installation code lives with the Ansible play, and how much is in the Configurator package you push to Artifactory. There are a number of steps which go along with installing and using the Configurator which you might find apply to all usages, in which case I would tend to add them to the Configurator package. These steps are things like:

- Verify the right version of the JDK is available (1.8).

- Unzip the tools.

- Copy to the right locations.

- Apply permissions.

- Add certificates to the right trust store / copy your own trust store into place.

- Execute the Configurator referencing the install config file (which will need to come from your Ansible play, pretty much always).
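Here's a minimal Python sketch of three of those steps: the JDK check, unzipping, and executing the Configurator. The copy, permission, and certificate steps are left out. The jar name pattern `openam-configurator-tool-*.jar` and the `--file` option reflect my understanding of the tools bundle, but they are assumptions. Check the README inside the zip for the version you download.

```python
import re
import subprocess
import zipfile
from pathlib import Path

# Matches `java version "1.8.0_221"`, `openjdk version "11.0.2"`, `openjdk version "17"`.
VERSION_RE = re.compile(r'version "(\d+)(?:\.(\d+))?')


def java_version(version_output: str) -> tuple[int, int]:
    """Parse the first two version numbers from `java -version` output, e.g. (1, 8)."""
    match = VERSION_RE.search(version_output)
    if not match:
        raise ValueError(f"unrecognised java -version output: {version_output!r}")
    return int(match.group(1)), int(match.group(2) or 0)


def require_java_8(java: str = "java") -> None:
    # `java -version` writes to stderr, not stdout.
    result = subprocess.run([java, "-version"], capture_output=True, text=True, check=True)
    if java_version(result.stderr) != (1, 8):
        raise RuntimeError("the Configurator tools expect JDK 1.8")


def run_configurator(tools_zip: Path, install_dir: Path, config_file: Path, java: str = "java") -> None:
    require_java_8(java)
    with zipfile.ZipFile(tools_zip) as archive:
        archive.extractall(install_dir)
    # Jar name pattern is an assumption; check the contents of your zip.
    jars = sorted(install_dir.rglob("openam-configurator-tool-*.jar"))
    if not jars:
        raise FileNotFoundError(f"configurator jar not found under {install_dir}")
    # The install config file comes from the Ansible play; `--file` is assumed (see README).
    subprocess.run([java, "-jar", str(jars[0]), "--file", str(config_file)], check=True)
```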

Repo: Forgerock AM (Ansible Role)

This role has to run the following (high level) steps:

- Run the JBoss webserver role.

- Configure JBoss’ standalone.xml to point at a certificate store with your SSL cert in it.

- Grab the war file from Artifactory and register it with JBoss.

- Pull the Configurator package from Artifactory.

- Run the Configurator with an install config file from the Role.

- Use the dsconfig tool to allow anonymous access to Open DS (if you are running the default install of Open DS).

- Add any required certs into the Open DS keystore (/{am config directory}/opends/config/keystore)

- Align passwords on certs using the keystore.pin file from the same directory.

More detailed install instructions can be found here.

I ran into a lot of issues while trying to write an install script which would work. Googling the problems helped, but having a Forgerock Backstage account and being able to ask their support team directly was invaluable.

A lot of issues were around getting certificates into the right stores, with the correct passwords. You need to take special care to make sure that Open DS also has access to the right SSL certs and trust stores.
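As an example of the certificate work, here's a minimal Python sketch. It reads the PIN from keystore.pin and builds a `keytool -importcert` call against the Open DS keystore path given above. The function names are hypothetical. It assumes keystore.pin holds the PIN as plain text on a single line. Whether you need further keytool options, such as a store type, depends on your setup.

```python
import subprocess
from pathlib import Path


def opends_config(am_config_dir: Path) -> Path:
    """Folder holding the Open DS keystore and keystore.pin."""
    return am_config_dir / "opends" / "config"


def read_pin(am_config_dir: Path) -> str:
    """Read the keystore PIN, assumed to be plain text on one line."""
    return (opends_config(am_config_dir) / "keystore.pin").read_text(encoding="utf-8").strip()


def import_cert_command(am_config_dir: Path, alias: str, cert_file: Path, pin: str) -> list[str]:
    keystore = opends_config(am_config_dir) / "keystore"
    return [
        "keytool", "-importcert", "-noprompt",
        "-alias", alias,
        "-file", str(cert_file),
        "-keystore", str(keystore),
        "-storepass", pin,
    ]


def import_cert(am_config_dir: Path, alias: str, cert_file: Path) -> None:
    command = import_cert_command(am_config_dir, alias, cert_file, read_pin(am_config_dir))
    # Don't log `command`: it contains the keystore PIN.
    subprocess.run(command, check=True)
```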

Repo: Forgerock AM Realm X (Ansible Playbook)

Where ‘X’ is just some name for your realm. With AM 6.5+ you have a few options for configuring realms: ssoadm, Amster, and the REST API. As I already had a number of scripts built for ssoadm from another installation, I went with that, except for custom auth trees, which ssoadm doesn’t know about. For these you can use either Amster or the REST API, but at the time I was working on this there was a bug in Amster which meant Forgerock were suggesting the REST API was the best choice.

For reference on how to use the command line tools and where to put different files, see here.

Running ssoadm commands one at a time to build a realm is very slow. Instead use the do-batch command, referenced here.
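For the auth trees, a REST call per tree is enough once you have an admin session. Here's a minimal Python sketch using only the standard library. The endpoint paths, the `Accept-API-Version` values, and the default `iPlanetDirectoryPro` cookie name are my understanding of the AM 6.5 API rather than something taken from this project. Verify them against your instance's API Explorer. The function names are hypothetical, and the realm is assumed to be a direct child of the root realm.

```python
import json
import urllib.parse
import urllib.request

# Default AM session cookie name; yours may have been changed.
COOKIE_NAME = "iPlanetDirectoryPro"


def auth_request(base_url: str, username: str, password: str) -> urllib.request.Request:
    """Build the admin login request; the JSON response should include a tokenId."""
    return urllib.request.Request(
        f"{base_url}/json/realms/root/authenticate",
        data=b"{}",
        method="POST",
        headers={
            "X-OpenAM-Username": username,
            "X-OpenAM-Password": password,
            "Content-Type": "application/json",
            "Accept-API-Version": "resource=2.0, protocol=1.0",
        },
    )


def tree_request(base_url: str, realm: str, tree_name: str, tree: dict, token: str) -> urllib.request.Request:
    """Build a PUT that creates or replaces one auth tree in a sub-realm of root."""
    path = (
        f"/json/realms/root/realms/{urllib.parse.quote(realm, safe='')}"
        "/realm-config/authentication/authenticationtrees/trees/"
        f"{urllib.parse.quote(tree_name, safe='')}"
    )
    return urllib.request.Request(
        base_url + path,
        data=json.dumps(tree).encode("utf-8"),
        method="PUT",
        headers={
            "Cookie": f"{COOKIE_NAME}={token}",
            "Content-Type": "application/json",
            "Accept-API-Version": "protocol=1.0,resource=1.0",
        },
    )


def send(request: urllib.request.Request) -> dict:
    """Send a request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Usage would be along the lines of `token = send(auth_request(base, "amadmin", password))["tokenId"]`, followed by `send(tree_request(base, "realmX", "LoginTree", tree_json, token))`.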

DevOps

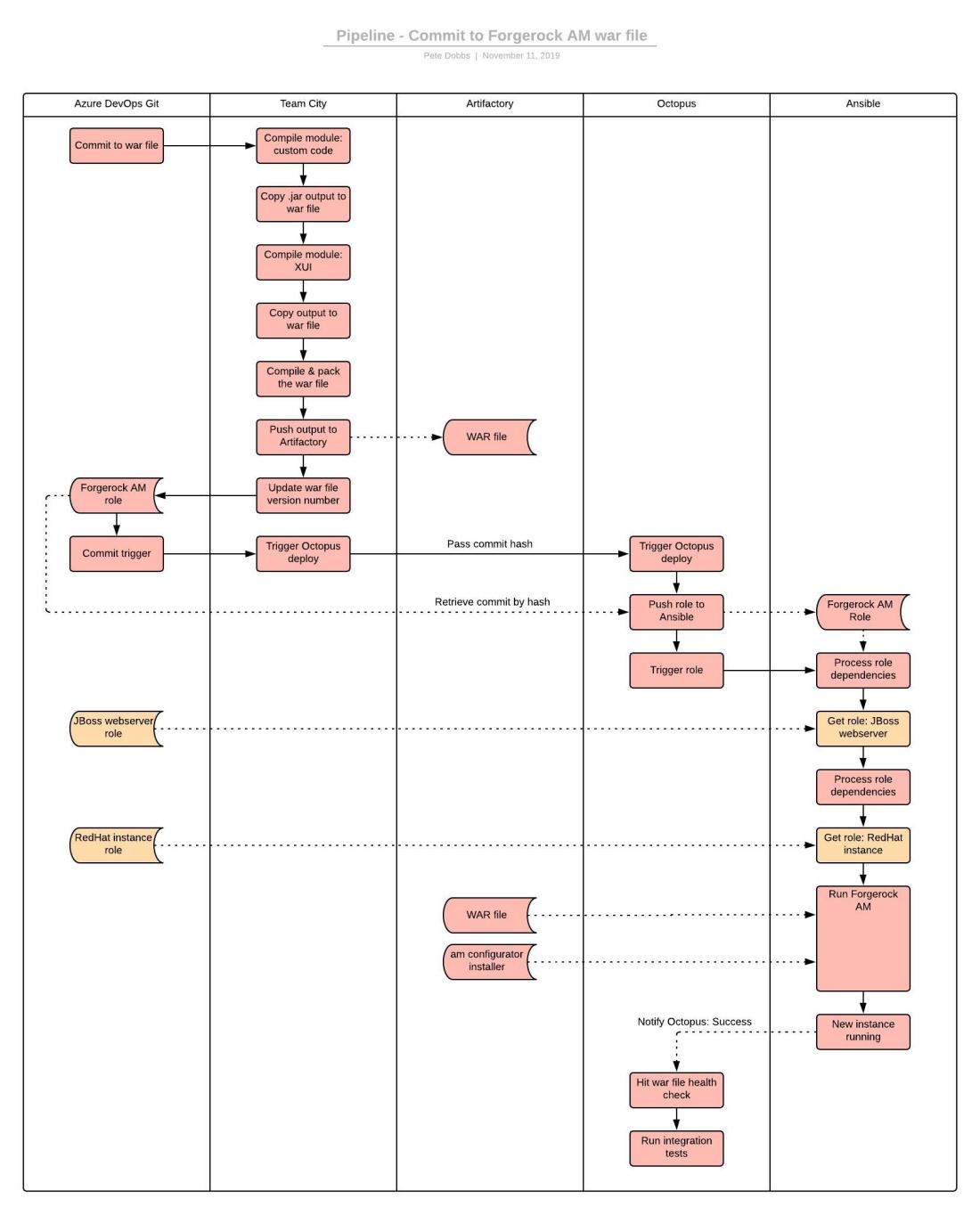

Our DevOps tool chain is git, Team City, Octopus, Ansible, and Artifactory. These work together well, but there are some important concepts to allow a nice separation between Dev teams and Platform/Infra teams.

Firstly, Octopus is the deployment platform, not Ansible. Deploying in this situation can be defined as moving the configuration to a new version, and then verifying the new state. It’s the Ansible configuration which is being deployed. Ansible maintains that configuration. When Ansible detects a failure or a scaling scenario, and brings up new instances, it doesn’t need to run the extensive integration tests which Octopus would, because the existing state has been validated already. Ansible just has to hit health checks to verify the instances are in place.

Secondly, developers should be building deployable packages for their protected applications and registering them in Artifactory. This means the Ansible play for a protected application is just ‘choco install blah’ or ‘apt install something’. It also means the developers are somewhat isolated from the ins and outs of Ansible – they can run their installer over and over without ever thinking about Ansible.
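The health check Ansible relies on (the first point above) can be a simple poll. AM ships an isAlive.jsp page that can serve as the target. The URL, attempt count, and delay here are assumptions. This minimal Python sketch takes the fetch and sleep functions as parameters, so the retry logic can be tested without a running server.

```python
import time
import urllib.error
import urllib.request
from typing import Callable


def http_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status for url, or 0 if the server couldn't be reached."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code
    except OSError:
        # URLError (connection refused, DNS failure) and timeouts are OSErrors.
        return 0


def wait_until_healthy(
    url: str,
    attempts: int = 30,
    delay: float = 10.0,
    fetch: Callable[[str], int] = http_status,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll url until it returns 200, giving up after `attempts` tries."""
    for attempt in range(attempts):
        if fetch(url) == 200:
            return True
        if attempt < attempts - 1:
            sleep(delay)
    return False
```

For example, `wait_until_healthy("https://am1.example.com:8443/openam/isAlive.jsp")`, where the host name is a placeholder.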

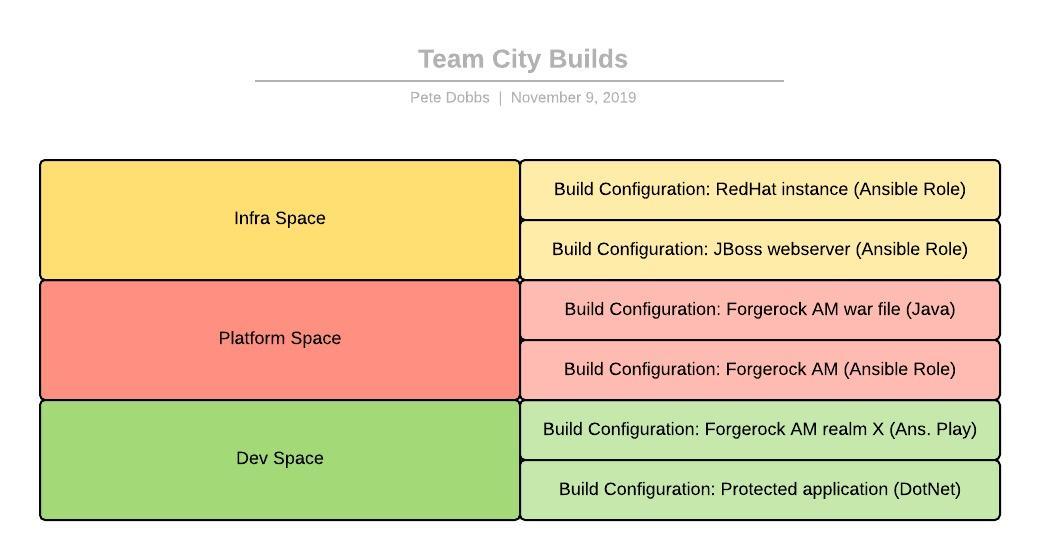

Fig. 4 and fig. 5 show the Team City builds and the Octopus Deploy jobs.

Notice that the Forgerock AM Realm X repo is an Ansible Playbook rather than a Role. The realm is “the sharp end”, there will only ever be a single realm X, so there’s never going to be a requirement to share it. We can package this playbook up and push it to Artifactory so it can be ‘yum installed’. We can include a bash script to process the requirements.yml (which installs the dependent roles and their dependencies) and then execute the play. The dependencies of each role (being one of the other roles in each case) are defined in a meta/main.yml file as explained here.

The dev-owned deploy project for the protected application includes the deployment of the entire stack. In this case, that means first deploying the Ansible playbook for AM realm X, which references the Forgerock AM role (and so its dependencies) to build the instance. A cleverly defined deploy project might deploy both the protected application and the AM realm playbook simultaneously, but fig. 6 shows the pipeline deploying one, then the other.

Now, I am not an Ansible guru by any stretch of the imagination. I’ve enjoyed using Chef in the past, but more often I find companies haven’t matured far enough to have developed an appetite for configuration management, so it happens that I hadn’t had any exposure to Ansible until this point. Please keep in mind that I might not have implemented the Ansible components in the most efficient way.

Roles vs playbooks

- Roles can be pulled directly from git repositories by ansible-galaxy. Playbooks have to be pushed to an Ansible server to run them.

- Roles get versioned in and retrieved from git repos. Playbooks get built and deployed to a package management platform such as Artifactory.

- Roles are easily shareable and can be consumed from other roles or playbooks. Playbooks reference files which are already in place on the Ansible server, either as part of the play or downloaded by ansible-galaxy.

- An application developer generally won’t have the exposure to Ansible to be able to write useful roles. An application developer can pretty quickly get their head around a playbook which just installs their application.

- My view of the world!

Connecting the dots

Take a look at an example I posted to GitHub recently, where I show a role and a playbook being installed via Vagrant. The playbook is overly simplified, without any variables, but it demonstrates how the ‘playbook to role to role…’ dependency chain can be initiated with only three files:

- configure.sh

- requirements.yml

- test_play.yml

Imagine if these files were packaged up and pushed to Artifactory. That is my idea of what would comprise the ‘Realm X Playbook’ package in fig. 6. Of course, test_play.yml would actually be a real play, with templates that set up the realm and variables for each environment. With this in mind, I hope that diagram is easier to trace.
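Here's a Python equivalent of what configure.sh has to do, as a minimal sketch. The two commands are standard Ansible CLI calls. The roles path, the use of `--force-with-deps` to re-fetch roles that track a git branch, and the optional inventory argument are assumptions about how you might package it. Installing into a `roles` folder next to the playbook means ansible-playbook finds the roles without extra configuration. ansible-galaxy also pulls in the dependencies listed in each role's meta/main.yml, provided they are specified in a form it can fetch.

```python
import subprocess
from pathlib import Path
from typing import Optional


def build_commands(play_dir: Path, playbook: str = "test_play.yml", inventory: Optional[str] = None) -> list[list[str]]:
    """Return the two commands a configure.sh-style entry point would run."""
    install = [
        "ansible-galaxy", "install",
        "-r", str(play_dir / "requirements.yml"),
        # Install next to the playbook, where ansible-playbook looks for roles by default.
        "-p", str(play_dir / "roles"),
        # Re-fetch roles and their dependencies so changes on the branch are picked up.
        "--force-with-deps",
    ]
    play = ["ansible-playbook", str(play_dir / playbook)]
    if inventory is not None:
        play += ["-i", inventory]
    return [install, play]


def run(commands: list[list[str]], dry_run: bool = False) -> None:
    """Echo each command and, unless dry_run, execute it, stopping on the first failure."""
    for command in commands:
        print("+", " ".join(command))
        if not dry_run:
            subprocess.run(command, check=True)
```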

Developer exposure

The only piece of Forgerock AM development which the dev team is exposed to is the realm definition. This is closely related to the protected application, so any developers working on authentication need to understand how the realm is set up and how to modify it. Having the dev team own the realm playbook helps distribute understanding of the platform.

Working in the platform and infra space

The above is just the pipeline for the protected application; every other repo triggers a pipeline as well. Fig. 7 shows what happens when there’s a commit to the war file repo.

Ultimately, the platform and infra QA pipelines (I call them QA pipelines because they’re there purely for testing) are kicked off by commits to the role repos. It’s probably a good idea to agree on a reasonable branching strategy so master is always prod-ready, because it could go at any time!

The next step for the pipeline in fig. 7 might be to kick off a deploy of all protected applications consuming that role. This might present a scoping and scale problem. If there are 30 applications being protected by Forgerock AM and a deploy is kicked off for every one of these at the same time into the same test environment, you may see false failures, and it may take a LONG time to verify the change. Good CI practice suggests you integrate and test as early as possible, but if the feedback loop is unavoidably long, you probably won’t want to kick it off for every commit when commits land two minutes apart.

The risk is that changes may build up before you have a chance to find out that they are wrong – mitigate it in whatever way works best for you. The right answer will depend on your own circumstances.
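One possible mitigation for the false failures, though not for the wait, is to cap how many of those deploys run at once. Here's a minimal Python sketch. `deploy` is a placeholder for whatever triggers a single deploy in your tooling, for example a call to your deployment server's API. The default of three parallel deploys is an arbitrary assumption.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def deploy_all(apps: list[str], deploy: Callable[[str], bool], max_parallel: int = 3) -> dict[str, bool]:
    """Run `deploy` for every app, with at most `max_parallel` running at once.

    Returns a mapping of app name to success, in the order given.
    """
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # map() yields results in input order, even if deploys finish out of order.
        results = list(pool.map(deploy, apps))
    return dict(zip(apps, results))
```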

I think that’s it

That pretty much covers everything I’ve had to implement or work around when putting together CI/CD automation for Forgerock Access Manager. Reading back through, I’m struck by how many tiny details need to be taken into consideration in order to make this work. It has been a huge effort to get this far, and yet I know this solution is far from complete – blue/green and rollbacks would be next on the agenda.

I think with the release of version 7, this will get much easier as they move to containers. That leaves the cluster instance management to Ansible and container orchestration to something like Kubernetes – a nice separation of concerns.

Even with containers, AM is still a hugely complicated platform. I’ve worked with it for just over a year and I’m struggling to see the balance of cost vs benefit. I wrote this article because I wanted to show how much complexity there really was. I think if I had read this at the beginning I would have been able to estimate the work better, at least!

Even with the huge complexity, it’s worth noting that this now redeploys reliably with every commit. It’s easy to see all the moving parts because there is just a single deploy pipeline which deploys the entire stack. Ownership of each component is nicely visible, thanks to the repos living in projects owned by different teams.

A success story?