Although I see developers writing more and more tests, their efforts are often ignored by QA and not taken into account by the test strategy. It’s common for developers to involve QA in their work, but this is not the full picture. To maximise the efficiency of test coverage, I think developer tests should be accounted for as part of the test approach.

The role of QA as the gatekeeper of quality, rather than the implementer of quality, allows good judgement to be used when deciding whether a developer’s tests provide suitable coverage to count toward the traditional QA effort. This requires QA to have some development skills, so that they are capable of seeing the benefits and flaws in developer-written tests.

What follows is a breakdown of how I categorise different types of test, followed by a “bringing it all together” section where I hope to outline a few approaches for streamlining the amount of testing done.

Unit Tests

Unit testing comes in a few different flavours. I’ve noticed some differences depending on language and platform, and there are also different reasons for writing unit tests.

Post development tests

These are the unit tests developers wrote before TDD became popular, and they still have a lot of value. They are aimed at ensuring business logic does what it’s supposed to at the time of development, and that it keeps on working when it is redeveloped five years later.

TDD

I fall into the crowd who believe TDD is more about code design than it is about functional correctness. Taking a TDD approach will help a developer write SOLID code, and hopefully make it much easier to read and debug. Having said that, it’s a fantastic tool for writing complex business logic, because generally you will have had a very kind analyst work out exactly what the output of that logic is expected to be. Tackling the development with a TDD approach makes translating that logic into code much easier, as it’s immediately obvious when you break something you wrote two minutes ago.

BDD

I’m a big believer in BDD tests being written at the unit level. For me, these are tests which are named and namespaced in a way that indicates the behaviour being tested. They will often have most of the code in a single setup method and test the result of running the setup in individual, appropriately named test methods. These can be used in the process of TDD, to design the code, and they can also be excellent at making a connection between acceptance criteria and business logic. Because the context of the test is important, I find there’s about a 50/50 split between when I can usefully write unit tests in a BDD fashion and when I work with a more general TDD approach. I’ve also found that tests like these can encourage the use of domain terminology from the story being worked on, as a result of the wording of the ACs.

Without a doubt, BDD style unit tests are much better at ensuring important behaviours remain unbroken than more traditional class level unit tests, because the way they’re named and grouped makes the purpose of the tests, and the specific behaviour under test, much clearer. I can tell very quickly if a described behaviour is important or not, but I can’t tell you whether the method GetResult() should always return the number 5. I would encourage this style of unit testing where business logic is being written.

However! BDD is not Gherkin. BDD tests place emphasis on the behaviour being tested rather than just on the correctness of the results. Don’t be tied to arbitrarily writing ‘given, when, then’ statements.
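A minimal sketch of a BDD-style unit test as described above, written with Python's built-in `unittest` purely for illustration (the post's own examples come from DotNet and Ruby). The delivery-charge rule, `calculate_delivery_charge` and the threshold values are hypothetical. The test class name states the context, `setUp` does all of the work once, and each test method names a single behaviour; there is no given/when/then ceremony.

```python
import unittest

# Hypothetical business rule for illustration: orders of 50.00 or more
# ship free; anything below pays a flat 4.99 delivery charge.
FREE_DELIVERY_THRESHOLD = 50.00
FLAT_DELIVERY_CHARGE = 4.99


def calculate_delivery_charge(order_total: float) -> float:
    """Business logic under test."""
    if order_total >= FREE_DELIVERY_THRESHOLD:
        return 0.0
    return FLAT_DELIVERY_CHARGE


# The class name states the context; setUp does the arrange/act work once.
class WhenAnOrderMeetsTheFreeDeliveryThreshold(unittest.TestCase):
    def setUp(self):
        self.charge = calculate_delivery_charge(FREE_DELIVERY_THRESHOLD)

    # Each test method names one behaviour and only asserts.
    def test_the_customer_is_not_charged_for_delivery(self):
        self.assertEqual(self.charge, 0.0)


class WhenAnOrderIsBelowTheFreeDeliveryThreshold(unittest.TestCase):
    def setUp(self):
        self.charge = calculate_delivery_charge(FREE_DELIVERY_THRESHOLD - 0.01)

    def test_the_customer_pays_the_flat_delivery_charge(self):
        self.assertEqual(self.charge, FLAT_DELIVERY_CHARGE)
```

Run with `python -m unittest`. When one of these fails, the report reads as a broken behaviour ("when an order is below the free delivery threshold, the customer pays the flat delivery charge") rather than as a broken method.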

Hitting a database

The DotNet developer in me screams “No!” whenever I think about this, but the half of me which loves Ruby on Rails understands that because MySQL is deployed with a single ‘apt’ command, and because I need to ensure my dynamically typed objects save and load correctly, hitting the db is a really good idea. My experience with RoR tells me that abstracting the database is way more work than just installing it (because ‘apt install mysql-server’ is far quicker to write than any number of mock behaviours). In the DotNet world, you have strongly typed objects, so checking that an int can be written to an integer column in a database is a bit redundant.

Yes, absolutely, this is blatantly an integration test. When working with dynamic languages (especially on Linux) I think bending the definition is worth the return.
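A minimal Python sketch of this "hit a real database" style of test. To keep it self-contained it swaps MySQL for Python's built-in `sqlite3` with an in-memory database; the `Customer` record and the `save`/`load` helpers are hypothetical stand-ins for whatever persistence layer is really in use (ActiveRecord, in a Rails app). The point is the round trip: what goes in must come back out with the same values and types, with no mocked repository in between.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Customer:
    # Hypothetical domain object; in a dynamic language nothing stops a
    # caller putting the wrong type into either field.
    name: str
    loyalty_points: int


def create_schema(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE customers ("
        " id INTEGER PRIMARY KEY,"
        " name TEXT NOT NULL,"
        " loyalty_points INTEGER NOT NULL)"
    )


def save(conn: sqlite3.Connection, customer: Customer) -> int:
    cur = conn.execute(
        "INSERT INTO customers (name, loyalty_points) VALUES (?, ?)",
        (customer.name, customer.loyalty_points),
    )
    conn.commit()
    return cur.lastrowid


def load(conn: sqlite3.Connection, customer_id: int) -> Customer:
    row = conn.execute(
        "SELECT name, loyalty_points FROM customers WHERE id = ?",
        (customer_id,),
    ).fetchone()
    return Customer(name=row[0], loyalty_points=row[1])


def test_customer_round_trips_through_the_database() -> None:
    # A real (in-memory) database instead of a mocked repository.
    conn = sqlite3.connect(":memory:")
    try:
        create_schema(conn)
        original = Customer(name="Ada", loyalty_points=120)
        loaded = load(conn, save(conn, original))
        assert loaded == original
        assert isinstance(loaded.loyalty_points, int)
    finally:
        conn.close()
```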

Scope of a unit test

This is important, because if we are going to usefully select tests to apply toward overall coverage, we need to know what is and isn’t being tested. There are different views on unit testing, on what does and doesn’t constitute a move from unit testing to integration testing, but I like to keep things simple. My view is that if a test crosses application processes, then it’s an integration test. If it remains in the application you are writing (or you’re hitting a db in RoR) and doesn’t require the launching of the application prior to test, then it’s a unit test. That means unit tests can cover a single method, a single class, a single assembly, or any combination of these. Pick whatever definition of ‘a unit’ works to help you write the test you need. Don’t be too constrained on terminology – if you need a group of tests to prove some functionality which involves half a dozen assemblies in your application, write them and call them unit tests. They’ll run after compile just fine. Who cares whether someone else’s idea of a ‘unit’ is hurt?

Who writes these?

I really hope that it’s obvious to you that developers are responsible for writing unit tests. In fact, developers aren’t only responsible for writing unit tests, they’re responsible for realising that they should be writing unit tests and then writing them. A developer NEVER has to ask permission to write a unit test, any more than they need permission to turn up to work. This is a part of their job – does a pilot need to ask before extending the landing gear?

How can QA rely on unit tests?

Firstly, let’s expel the idea that QA might ‘rely’ on a unit test. A unit test lives in the developer’s domain and may change at any moment or be deleted. As is so often the case in software development, the important element is the people themselves. If a QA and a dev work together regularly and the QA knows there are unit tests, has even seen them and understands how the important business logic is being unit tested, then that QA has far greater confidence that the easy stuff is probably OK. Hopefully QA have access to the unit test report from each build, and the tests are named well enough to make some sense. In this scenario, it’s easier to be confident that the code has been written to a standard that is ready for a QA to start exposing it to real exploratory “what if” testing, rather than just checking it meets the acceptance criteria. Reasons for story rejection are far less likely to be simple logic problems.

Component Tests

I might upset some hardware people, but in my mind a component test runs against your code while it is running, without crossing application boundaries downstream. So if you have written a DotNet Web API service, you would be testing the running endpoint of that service while intercepting downstream requests and stubbing the responses. I’ve found Mountebank to be an excellent tool for this, but I believe there are many more to choose from.
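A rough Python sketch of a component test, assuming a hypothetical service that exposes `GET /quote` and totals the prices it fetches from a downstream price service. Mountebank would normally play the downstream role; to keep the example self-contained, a tiny stub built on Python's standard `http.server` stands in for it. The test starts the stub and the service on free local ports, calls the running service through its public endpoint, and checks the response, so no real dependency is ever touched.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def send_json(handler: BaseHTTPRequestHandler, payload) -> None:
    """Write a 200 JSON response from inside a request handler."""
    body = json.dumps(payload).encode()
    handler.send_response(200)
    handler.send_header("Content-Type", "application/json")
    handler.send_header("Content-Length", str(len(body)))
    handler.end_headers()
    handler.wfile.write(body)


def get_json(port: int, path: str):
    """GET a JSON document from a local port (http.client ignores proxy env vars)."""
    conn = HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request("GET", path)
        resp = conn.getresponse()
        return resp.status, json.loads(resp.read())
    finally:
        conn.close()


def start_server(handler_cls) -> ThreadingHTTPServer:
    """Run an HTTP server on a free local port in a background thread."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), handler_cls)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


class QuietHandler(BaseHTTPRequestHandler):
    def log_message(self, *args):  # keep test output clean
        pass


def stub_price_service(prices: dict):
    """Stub for the downstream dependency (Mountebank's role): canned prices."""
    class Stub(QuietHandler):
        def do_GET(self):
            send_json(self, prices)
    return Stub


def quote_service(price_service_port: int):
    """Hypothetical service under test: totals the prices fetched downstream."""
    class Quote(QuietHandler):
        def do_GET(self):
            if self.path != "/quote":
                self.send_error(404)
                return
            _, prices = get_json(price_service_port, "/prices")
            send_json(self, {"total": sum(prices.values())})
    return Quote


def run_component_test() -> dict:
    stub = start_server(stub_price_service({"widget": 3, "gadget": 4}))
    service = start_server(quote_service(stub.server_address[1]))
    try:
        # Exercise the *running* service through its public endpoint.
        status, body = get_json(service.server_address[1], "/quote")
        assert status == 200
        return body
    finally:
        for server in (service, stub):
            server.shutdown()
            server.server_close()
```

Wiring the downstream address in from outside (here, a port number) is what makes the stub swappable; the same service pointed at a real price service would turn this into an integration test.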

TDD and BDD

Component tests can be run on a developer’s machine, so it’s quite possible for these to be useful in a TDD/BDD fashion. The downside is that the application needs to be running in order to run the tests, so if they are to be executed from a build server, then the application needs to be started and the stubbing framework set up – this can be trickier to orchestrate. As with any automation, this is only really tricky the first time it’s done. After that, the code and patterns are in place.

In my experience, component tests have limited value. I’ve found that this level of testing is often swallowed by the combination of good unit testing and good integration testing. From one point of view, they are actually integration tests, as you are testing the integration between the application and the operating system.

Having said that, if the downstream systems are not available or reliable, then this approach allows the application functionality to be tested separately from any integrations.

Who writes these?

Developers write component tests. They may find that they are making changes to an older application which they don’t fully understand. Being able to sandbox the running app and stub all external dependencies can help in these situations.

How can QA rely on component tests?

This again comes down to the very human relationship between the QA and the developer. If there is a close working relationship, and the developer takes the time to show the QA the application under test and explain why they’ve tested it in that way, then it increases the confidence the QA has that the code is ready for them to really go to town on it. It might be that in a discussion prior to development, the QA had suggested that they would be comfier if this component test existed. The test could convey as much meaning as the ACs in the story.

Again, quality is ensured by having a good relationship between the people involved in building it.

Application Scoped Integration Tests

Integration tests prove that the application functions correctly when exposed to the other systems it will be talking to once in production. They rely on the application being installed and running, and they rely on other applications in ‘the stack’ also running. Strictly speaking, any test that crosses application boundaries is an integration test, but I want to focus on automated tests. We haven’t quite got to manual testing yet.

TDD and BDD

With an integration test, we are extending the feedback time a little too far for it to be your primary TDD strategy. You may find it useful to write an integration test to show a positive and negative result from some business logic at the integration level before that logic is deployed, just so you can see the switch from failing to passing tests, but you probably shouldn’t be testing all boundary results at an integration level as a developer. It’s definitely possible to write behaviour focussed integration tests. If you’re building an API and the acceptance criteria include a truth table, you pretty much have your behaviour tests already set out for you, but consider what you are testing at the integration level – if you have unit tests proving the logic, then you only need to test that the business logic is hooked in correctly.

The difficult part of this kind of testing often seems to be setting up the data in downstream systems for the automated tests. I find it difficult to understand why anyone would design a system where test data can’t be injected at will – this seems an obvious part of testable architecture; a non-functional requirement that keeps getting ignored for no good reason. If you have a service from which you need to retrieve a price list, that service should almost certainly be capable of saving price list data sent to it in an appropriate way; allowing you to inject your test data.

Scope

The title of this section is ‘Application Scoped Integration Tests’ – my intention with that title is to draw a distinction between tests which are intended to test the entire stack and tests which are intended to test the application or service you are writing at the time. If you have 10 downstream dependencies in your architecture, these tests would hit real instances of these dependencies but you are doing this to test the one thing you are building (even though you will generally catch errors from further down the stack as well).

Who writes these?

These tests are still very closely tied with the evolution of an application as it’s being built, so I advocate for developers to write these tests.

How can QA rely on application scoped integration tests?

Unit and component tests are written specifically to tell the developer that their code works; integration tests are higher up the test pyramid. This means they are more expensive and their purpose should be considered carefully. Although I would expect a developer to write these tests alongside the application they are building, I would expect significant input from a QA to help with what behaviours should and shouldn’t be tested at this level. So we again find that QA and dev working closely gives the best results.

Let’s consider a set of behaviours defined in a truth table which has different outcomes for different values of an enum retrieved from a downstream system. The application doesn’t have control over the values in the enum; it’s the downstream dependency that is returning them, so they could conceivably change without the application development team knowing it. At the unit test level, we can write a test to prove every outcome of the truth table. At the integration level, we don’t need to re-write those tests, but we do need to verify that the enum contains exactly the values we are expecting, and what happens if the enum can’t be retrieved at all.

Arriving at this approach through discussion between QA and Dev allows everyone to understand how the different tests at different levels complement each other to prove the overall functionality.
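A minimal Python sketch of the enum example above. `AccountStatus`, the truth table, `permissions_for`, `load_statuses` and `StatusesUnavailable` are all hypothetical. The unit-level test walks every row of the truth table; the integration-level tests re-test none of that logic and only check that the dependency returns exactly the values the table was written against, and that an unreachable dependency is reported in a defined way. The fetch function is passed in so the sketch runs offline; in a real integration test it would call the deployed downstream service.

```python
from enum import Enum
from typing import Callable, Iterable, Set, Tuple


class AccountStatus(Enum):
    # Values owned and returned by the downstream system (hypothetical).
    ACTIVE = "ACTIVE"
    SUSPENDED = "SUSPENDED"
    CLOSED = "CLOSED"


# Hypothetical truth table from the ACs: status -> (can_order, can_view_history)
TRUTH_TABLE = {
    AccountStatus.ACTIVE: (True, True),
    AccountStatus.SUSPENDED: (False, True),
    AccountStatus.CLOSED: (False, False),
}


def permissions_for(status: AccountStatus) -> Tuple[bool, bool]:
    """Business logic under test."""
    can_order = status is AccountStatus.ACTIVE
    can_view_history = status is not AccountStatus.CLOSED
    return can_order, can_view_history


class StatusesUnavailable(Exception):
    """Hypothetical error the application raises if statuses can't be fetched."""


def load_statuses(fetch: Callable[[], Iterable[str]]) -> Set[str]:
    """Application code that retrieves the status values from downstream."""
    try:
        return set(fetch())
    except OSError as exc:  # connection refused, timeouts, etc.
        raise StatusesUnavailable("status service unreachable") from exc


# Unit level: every row of the truth table, fast and exhaustive.
def unit_test_every_row_of_the_truth_table() -> None:
    for status, expected in TRUTH_TABLE.items():
        assert permissions_for(status) == expected, status


# Integration level: no logic re-tested, only the contract with the dependency.
def integration_test_statuses_match_exactly(fetch) -> None:
    returned = load_statuses(fetch)
    expected = {s.value for s in AccountStatus}
    assert returned == expected, (
        f"unexpected: {returned - expected}, missing: {expected - returned}"
    )


def integration_test_unreachable_dependency_is_reported(failing_fetch) -> None:
    try:
        load_statuses(failing_fetch)
    except StatusesUnavailable:
        return
    raise AssertionError("expected StatusesUnavailable")
```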

Consumer Contracts

These are probably my favourite type of test! Generally written for APIs and message processors, these tests can prevent regression issues caused by services getting updated beyond the expectation of consumers. When a developer is writing something which must consume a service (whether via synchronous or asynchronous means) they write tests which execute against the downstream service to prove it behaves in a way that the consumer can handle.

For example: if the consumer expects a 400 HTTP code back when it POSTs an address with no ‘line 1’ field, then the test will intentionally POST the invalid address and assert that the resulting HTTP code is 400. This gets tested because subsequent logic in the consumer relies on having received the 400; if the consumer didn’t care about the response code then this particular consumer contract wouldn’t include this test.

The clever thing about these tests is when they are run: the tests are given to the team who develop the consumed service and are run as part of their CI process. They may have similar tests from a dozen different consumers, some testing the same thing; the value is that it’s immediately obvious to the service developers who relies on what behaviour. If they break something, then they know who will be impacted.
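A minimal Python sketch of the ‘no line 1’ contract from the example above, assuming a hypothetical provider endpoint `POST /addresses` that accepts JSON with fields such as `line1` and `postcode`. It uses only the standard library rather than a contract-testing framework such as Pact. The contract is a plain function that takes the provider's host and port, so the provider team can run it in their own CI against whatever instance they have just built; the throwaway local provider exists only so the sketch runs end to end.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def contract_missing_line1_is_rejected_with_400(host: str, port: int) -> None:
    """Consumer contract: our consumer relies on a 400 for an address without line1."""
    conn = HTTPConnection(host, port, timeout=5)
    try:
        body = json.dumps({"postcode": "AB1 2CD"}).encode()  # deliberately no "line1"
        conn.request("POST", "/addresses", body=body,
                     headers={"Content-Type": "application/json"})
        status = conn.getresponse().status
    finally:
        conn.close()
    assert status == 400, f"consumer expects 400, provider returned {status}"


class ExampleProviderHandler(BaseHTTPRequestHandler):
    """Stand-in provider so the sketch is runnable; not part of the contract."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        address = json.loads(self.rfile.read(length) or b"{}")
        self.send_response(201 if address.get("line1") else 400)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, *args):  # keep test output clean
        pass


def run_against_example_provider() -> None:
    server = ThreadingHTTPServer(("127.0.0.1", 0), ExampleProviderHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        contract_missing_line1_is_rejected_with_400("127.0.0.1", server.server_address[1])
    finally:
        server.shutdown()
        server.server_close()
```

Note that the contract asserts only the status code, because that is all this consumer relies on; a consumer that ignored the code would not include this test at all.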

Scope

This is the subject of some disagreement. There is a school of thought which suggests nothing more than the shape of the request, the shape of the response, and how to call the service should be tested; beyond that should be a black box. Personally, I think that while this is probably correct most of the time, there should be some wiggle room, depending on how coupled the services are. If a service sends emails, then you might want to check for an ‘email sent’ event being raised after getting a successful HTTP response (even though the concept of raising the event belongs to the emailer service) – it’s a fine line: testing too deep increases coupling, but all situations are different.

Who writes these?

These are written by developers and executed in CI.

How can QA rely on consumer contracts?

Consumer contracts are one of the most important classes of tests. If the intention is ever to achieve continuous delivery, these types of test will become your absolute proof that a change to a service hasn’t broken a consumer. Because they test services, not UI, they can be automated and all-encompassing, but they might not test at a level that QA would normally think about. To get a QA to understand these tests, you will probably have to show how they work and what they prove. They will execute well before any code gets to a ‘testing’ phase, so it’s important for QA to understand the resilience that the practice brings to distributed applications.

Yet again we are talking about good communication between dev and QA as being key to proving the tests are worth taking into consideration.

Stack Scoped Integration Tests

These are subtly different from application scoped integration tests.

There are probably respected technologists out there who will argue that these are the same class of test. I have seen them combined and I have seen them adopted individually (or more often not adopted at all) – I draw a distinction because of the different intentions behind writing them.

These tests are aimed at showing the interactions between the entire stack are correct from the point of view of the main entry point. For example, a microservice may call another service which in turn calls a database. A stack scoped test would interact with the first microservice in an integrated environment and confirm that relevant scenarios from right down the stack are handled correctly.

TDD and BDD

You would be forgiven for wondering how tests at such a high level can be included in TDD or BDD efforts; the feedback loop is pretty long. I find these kinds of tests are excellent for establishing behaviours around NFRs, which are known up front so failing tests can be put in place. They are also great at showing some happy path scenarios, while trying to avoid full-on boundary testing (simply because the detail of boundary values can be tested much more efficiently at a unit level). It might be worth looking at the concept of executable specifications and tools such as FitNesse – these allow behaviours to be defined in a hierarchical wiki and linked directly to both integration and unit tests to prove they have been fulfilled. It’s an incredibly efficient way to produce documentation, automated tests, and functioning code at the same time.

Scope

Being scoped to the stack means that there is an implicit intention for these tests to be applied beyond the one service or application. We are expecting to prove integrations right down the stack. This also means that it might not be just a developer writing these. If we have a single suite of stack tests, then anyone making changes to anything in the stack could be writing tests in this suite. For new features, it would also be efficient if QA were writing some of these tests; this can help drive personal integration between dev and QA, and help the latter get exposure to what level of testing has already been applied before it gets anywhere near a classic testing phase.

These tests can be brittle and expensive if the approach is wrong. Testing boundary values of business logic at a stack level is inefficient. That isn’t to say that you can’t have an executable specification for your business logic, just that it possibly shouldn’t be set up as an integration test – perhaps the logic could be tested at multiple levels from the same suite.

How can QA rely on stack scoped integration tests?

These tests are quite often written by a QA cooperating closely with a BA, especially if you are using something like FitNesse and writing executable specifications. A developer may write some plumbing to get the tests to execute against their code in the best way. Because there is so much involvement from QA, it shouldn’t be difficult for these tests to be trusted.

I think this type of testing, applied correctly, demonstrates the pinnacle of cooperation between BA, QA, and dev; it should always result in a great product.

Automated UI Tests

Many user interfaces are browser based, and as such need to be tested in various different browsers and different versions of each browser. Questions like “does the submit button work with good data on all browsers?” are inefficient to answer without automation.

Scope

This is a tricky class of test to scope correctly. Automated UI tests are often brittle and hard to change, so if you have too many it can start to feel like the tests are blocking changes. I tend to scope these to the primary sales pipelines and calls to action: “can you successfully carry out your business?” – anything more than this tends to quickly become more pain than it is worth. It is far more efficient to look at how quickly a small mistake in a less important part of your site/application could be fixed.

This is an important problem to take into consideration when deciding where to place business logic. If you have a web application calling a web service, business logic can be tested behind the service FAR more easily than in the web application.

Who writes these?

I’ve usually seen QAs writing these, although I have written a handful myself in the past. They tend to get written much later in the development lifecycle than other types of test as they rely on attributes of the actual user interface to work. This is the very characteristic which often makes them brittle, as when the UI changes then they tend to break.

How can QA rely on automated UI tests?

Automated UI tests are probably the most brittle and most expensive tests to write, update, and run. It is one of the most expensive ways to find a bug, unless the bug is a regression issue detected by a pre-existing test (and your UI tests are running frequently). To rely on these tests, they need to be used carefully; just test the few critical journeys through your application which can be tested easily. Don’t test business logic this way, ever. These tests are often sat solely in the QA domain; written by a QA, so trusting them shouldn’t be a problem.

Exploratory Testing

This is one of the few types of testing which human beings are built for. It’s generally applied to user interfaces and is executed by a QA who tries different ways to break the application, either by acting ‘stupid’ or malicious. It simply isn’t practical yet to carry out this kind of testing in an automated fashion; it requires the imagination of an actual person. The intention is to catch problems which were not thought of before the application was built. These might be things which were missed, or they may be a result of confusing UX which couldn’t be foreseen without the end result in place.

Who does this?

This is (IMO) the ‘traditional’ QA effort.

User Acceptance Testing

Doesn’t UAT stand for “test everything all over again in a different environment”?

I’ve seen the concept of UAT brutalised by more enterprises than I can count. User acceptance testing is meant to be a last, mostly high level, check to make sure that what has been built is still usable when exposed to ‘normal people’ (aka. the end users).

Things that aren’t UAT:

- Running an entire UI automation suite all over again in a different environment.

- Running pretty much any automation tests (with the possible exception of some core flows).

- Blanket re-running of the tests passing in other environments in a further UAT environment.

If you are versioning your applications and tests in a sensible way, you should know what combinations of versions lead to passing tests before you start UAT. UAT should be exactly what it says on the tin: give it to some users. They’re likely to immediately try to do something no-one has thought about – that’s why we do UAT.

Any new work coming out of UAT will likely not be fixed in that release – don’t rely on UAT to find stuff. If you don’t think your pre-UAT test approach gives sufficient confidence then change your approach. If you feel that your integration environment is too volatile to give reliable test results for features, have another environment with more controls on deployments.

Who runs these tests?

It should really be end users, but often it’s just other QAs and BAs. I recommend this not being the same people who have been involved right through the build, although some exposure to the requirements will make life easier.

How can QA rely on User Acceptance Testing?

QA should not rely on UAT. By the time software is in a UAT phase, QA should be ok for whatever has been built to hit production. That doesn’t mean outcomes from UAT are ignored, but the errors found during UAT should be more fundamental gaps in functionality which were either not considered or poorly conceived before a developer ever got involved, or (more often than not) yet more disagreement on the colour of the submit button.

Smoke Testing

Smoke testing originated in the hardware world, where a device would be powered up and if it didn’t ‘smoke’, it had passed the test. Smoke testing in the software world isn’t a huge effort. These are a few, lightweight tests which can confirm that your application deployed properly; slightly more in-depth than a simple healthcheck, but nowhere near as comprehensive as your UI tests.

Who runs these tests?

These should be automated and executed from your deployment platform after each deploy. They give early feedback of likely success or definite failure without having to wait for a full suite of integration tests to run.
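A minimal Python sketch of a post-deploy smoke test, assuming a hypothetical set of endpoints (`/health` plus a couple of key read-only pages) on the freshly deployed application. It checks a little more than a healthcheck, namely that each endpoint answers with the expected status code, and returns a list of failures so the deployment platform can fail the step when the list isn't empty. The fake local app, with one deliberately broken endpoint, is there only so the sketch runs end to end.

```python
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical checks: (path, expected status). A real list would cover the
# handful of things that prove the deploy worked, not business logic.
SMOKE_CHECKS = [
    ("/health", 200),
    ("/products", 200),
    ("/basket", 200),
]


def run_smoke_tests(host: str, port: int, checks=SMOKE_CHECKS) -> list:
    """Return a list of human-readable failures (an empty list means pass)."""
    failures = []
    for path, expected in checks:
        conn = HTTPConnection(host, port, timeout=5)
        try:
            conn.request("GET", path)
            resp = conn.getresponse()
            resp.read()
            if resp.status != expected:
                failures.append(f"{path}: expected {expected}, got {resp.status}")
        except OSError as exc:  # refused connections, timeouts, DNS errors
            failures.append(f"{path}: {exc}")
        finally:
            conn.close()
    return failures


class FakeDeployedApp(BaseHTTPRequestHandler):
    """Stand-in for the deployed application; /basket is deliberately broken."""

    def do_GET(self):
        self.send_response(500 if self.path == "/basket" else 200)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, *args):  # keep test output clean
        pass


def smoke_test_fake_app() -> list:
    server = ThreadingHTTPServer(("127.0.0.1", 0), FakeDeployedApp)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return run_smoke_tests("127.0.0.1", server.server_address[1])
    finally:
        server.shutdown()
        server.server_close()
```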

How can QA rely on Smoke Testing?

QA don’t rely on smoke tests; these are really more for developers to see fundamental deployment issues early. QA are helped by the early feedback to the developer, which means they don’t waste their time trying to test something which won’t even run.

Penetration Testing

Penetration testing is a specific type of exploratory test which requires some specialist knowledge. The intention is to gain access to servers and/or data maliciously via the application under test. There are a few automated tools for this, but they only cover some very basic things. This is again better suited to a human with an imagination.

Who runs these tests?

Generally a 3rd party who specialises in penetration testing is brought in to carry out these tests. That isn’t to say that security shouldn’t be considered until then, but keeping up with new attacks and vulnerabilities is a full time profession.

I haven’t yet seen anyone take learnings from penetration testing and turn them into a standard automation suite which can run automatically against new applications of a similar architecture (e.g. most web applications could be tested for the same set of vulnerabilities), but I believe this would be a sensible thing to do; better to avoid the known issues rather than repeatedly fall foul of them and have to spend time rewriting.
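A minimal Python sketch of what one slice of such a suite might look like: a repeatable check that HTTP responses carry a baseline of security headers and don't leak implementation details. The header list is an assumption for illustration; a real suite would be driven by the actual penetration test findings. The check works on a plain dict of response headers, so it can be pointed at responses from any web application with a similar architecture.

```python
# Hypothetical baseline distilled from past penetration-test findings:
# header name -> predicate the header's value must satisfy.
REQUIRED_SECURITY_HEADERS = {
    "Strict-Transport-Security": lambda v: "max-age=" in v,
    "X-Content-Type-Options": lambda v: v.strip().lower() == "nosniff",
    "Content-Security-Policy": lambda v: bool(v.strip()),
}

# Headers that disclose the technology stack and should not be sent.
FORBIDDEN_HEADERS = ("X-Powered-By", "X-AspNet-Version")


def security_header_findings(headers: dict) -> list:
    """Return a list of findings; an empty list means the response passes."""
    # HTTP header names are case-insensitive, so normalise before comparing.
    normalised = {name.lower(): value for name, value in headers.items()}
    findings = []
    for name, is_ok in REQUIRED_SECURITY_HEADERS.items():
        value = normalised.get(name.lower())
        if value is None:
            findings.append(f"missing header: {name}")
        elif not is_ok(value):
            findings.append(f"weak value for {name}: {value!r}")
    for name in FORBIDDEN_HEADERS:
        if name.lower() in normalised:
            findings.append(f"information leak via header: {name}")
    return findings
```

Each new penetration test finding that generalises can become another entry in these tables, so the next application is checked for it automatically.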

How can QA rely on Penetration Testing?

Your QA team will generally not have the expert knowledge to source penetration testing anywhere other than from a 3rd party. The results often impact Ops as well as Dev, so QA are often not directly involved, as they are primarily focussed on the application, not how it sits in the wider enterprise architecture.

Bringing It All Together

There are so many different ways to test our software and yet I see a lot of enterprises completely ignoring half of them. Even when there is some knowledge of the different classes of test, it’s deemed too difficult to use more than a very limited number of strategies.

I’ve seen software teams write almost no unit tests or developer written integration tests and then hand over stories to a QA team who write endless UI automation tests. Why is this a bad thing? I think people forget that the test pyramid is about where business value is maximised; it isn’t an over-simplification taught to newbies and university students, it reflects something real.

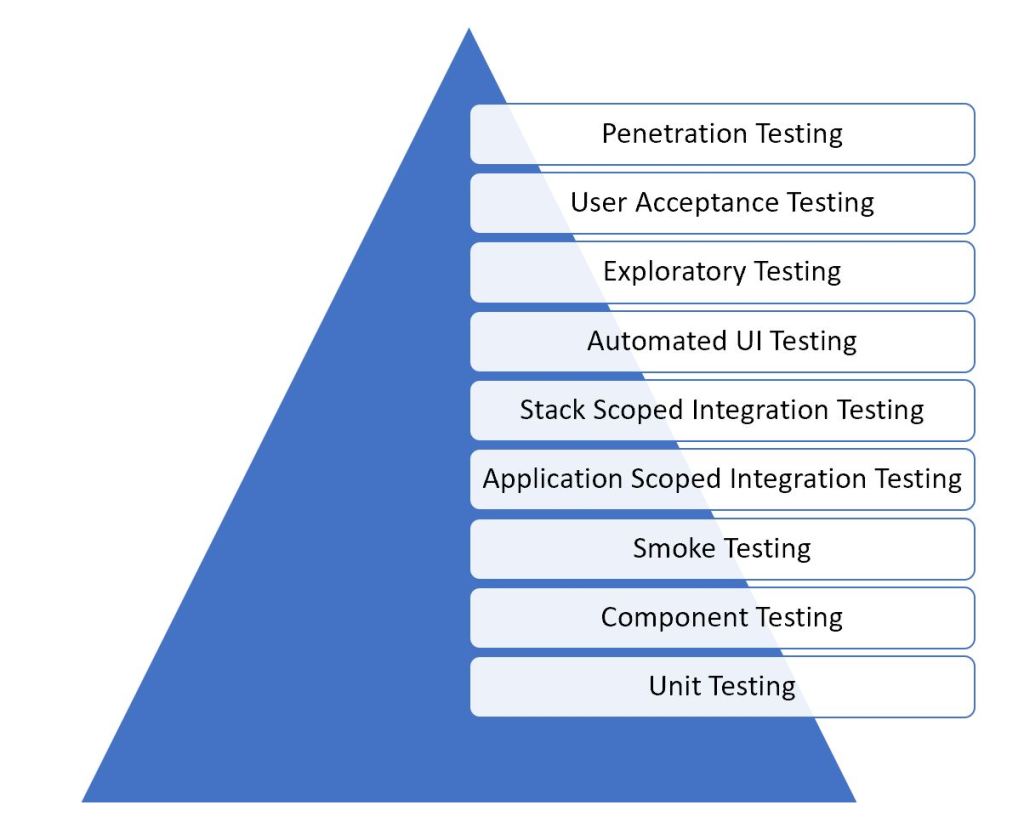

Here is my list of test types in the pyramid:

Notice that I haven’t included Consumer Contracts in my list. This is because Consumer Contracts can be run at Unit, Integration, or Component levels, so they are cross-cutting in their way.

In case you need reminding: the higher the test type is on the pyramid, the more expensive it is to fix a bug which is discovered there.

The higher levels of the pyramid are often over-inflated because the QA effort is focused on testing, and not on assuring quality. In an environment where a piece of work is ‘thrown over the fence’ to the QA team, there is little trust (or interest) in any efforts the developer might have already gone to in the name of quality. This leads to inefficiently testing endless combinations of request properties of APIs or endless possibilities of paths a user could navigate through a web application.

If the software team can build an environment of trust and collaboration, it becomes easier for QA to work closer with developers and combine efforts for test coverage. Some business logic in an API being hit by a web application could be tested with 100% certainty at the unit level, leaving integration tests to prove the points of integration, and just a handful of UI tests to make sure the application handles any differing responses correctly.

This is only possible with trust and collaboration between QA and Developers.

Distrust and suspicion leads to QA ignoring the absolute proof of a suite of passing tests which fully define the business logic being written.

What does it mean?

Software development is a team effort. Developers need to know how their code will be tested, QA need to know what testing the developer will do, even Architects need to pay attention to the testability of what they design; and if something isn’t working, people need to talk to each other and fix the problem.

Managers of software teams all too often focus on getting everyone to do their bit as well as possible, overlooking the importance of collaborative skills; missing the most important aspect of software delivery.

One thought on “Testing Times”